LUART — Amphibious Robotic Turtle

Surrogate compliance modeling enables reinforcement-learned locomotion gaits for a soft-rigid hybrid turtle robot

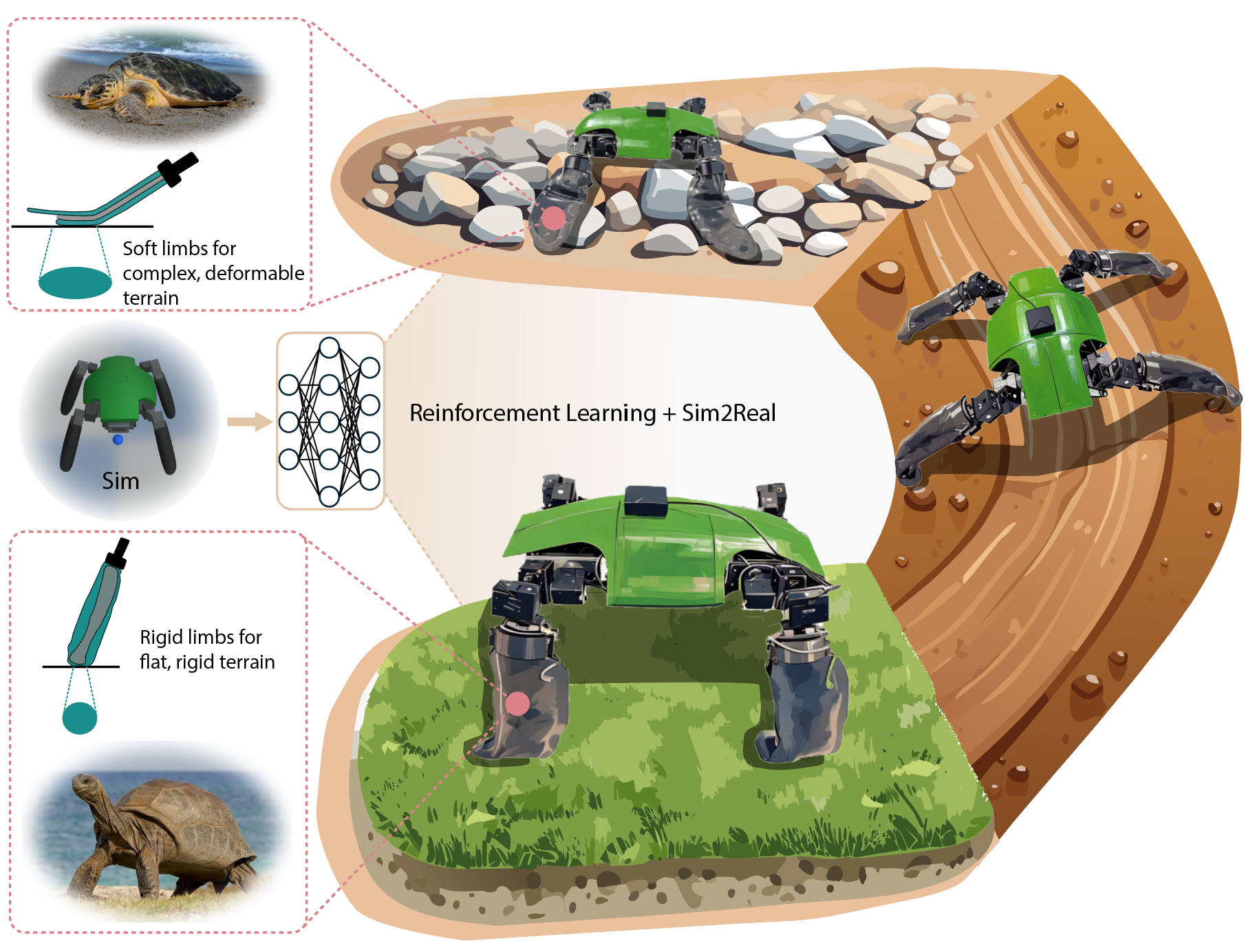

This project is joint work I led with Jue Wang at Yale’s Faboratory (Prof. Rebecca Kramer-Bottiglio), building LUART, a Learning Untethered Amphibious Robotic Turtle. The story is simple: one turtle-inspired quadruped whose limbs can switch between a rigid “tortoise leg” and a soft “sea-turtle flipper,” and a single reinforcement learning (RL) policy that learns to handle that soft-rigid morphology change and pick the right gait for whatever ground is underneath.

The key trick is that we train everything in a rigid-body simulator. Soft-body physics is too expensive for large-scale RL, so instead of simulating the compliant limb directly we treat its effective length and center of mass as parameters and randomize them over wide ranges during training. The resulting policy is robust to the real deformation the physical limb undergoes and transfers to hardware without further tuning. With that same policy, changing the pouch pressure and the body-height command is enough to move between walking and crawling, and between rigid and soft limb modes, across flat ground, gravel, mud, and grass.
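The randomization idea above can be sketched in a few lines. This is a minimal illustration, not the training code used for LUART: the `LimbParams` fields, the nominal length, and both sampling ranges are hypothetical placeholders chosen only to show the pattern of drawing a fresh effective length and center-of-mass offset for each limb at the start of an episode.

```python
import random
from dataclasses import dataclass


@dataclass
class LimbParams:
    """Rigid-body stand-in for one compliant limb (hypothetical fields)."""
    effective_length_m: float  # length of the rigid link that replaces the soft limb
    com_offset_m: float        # center-of-mass position along the link, from the joint


# Illustrative nominal value and ranges, NOT the values used for LUART.
NOMINAL_LENGTH_M = 0.12
LENGTH_SCALE_RANGE = (0.7, 1.1)  # multiplier applied to the nominal length
COM_FRACTION_RANGE = (0.3, 0.7)  # center of mass as a fraction of the sampled length


def sample_limb_params(rng: random.Random) -> LimbParams:
    """Draw one randomized limb for a training episode."""
    length = NOMINAL_LENGTH_M * rng.uniform(*LENGTH_SCALE_RANGE)
    com = length * rng.uniform(*COM_FRACTION_RANGE)
    return LimbParams(effective_length_m=length, com_offset_m=com)


def sample_robot(rng: random.Random, n_limbs: int = 4) -> list[LimbParams]:
    """Sample each limb independently so asymmetric deformation is also covered."""
    return [sample_limb_params(rng) for _ in range(n_limbs)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the printout is repeatable
    for i, limb in enumerate(sample_robot(rng)):
        print(f"limb {i}: length={limb.effective_length_m:.3f} m, "
              f"com={limb.com_offset_m:.3f} m")
```

In a real training loop the sampled values would be written into the simulator's link geometry and inertial properties before each rollout; how that is done depends on the simulator and is left out here.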

LUART concept: one robot, two limb modes — rigid columnar legs for flat terrain, compliant flippers for gravel, mud, and grass — with a single RL-trained policy that adapts.

Sim-to-real comparison

Side-by-side of the learned policy in Genesis vs. the physical robot on flat ground. Mean velocity-mapping error is below 6% across walking, backward walking, lateral motion, and in-place turning.
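For readers who want to reproduce this kind of number, here is a small sketch of one plausible way to compute it. The definition used, the absolute difference between measured and commanded velocity divided by the commanded velocity, is an assumption on my part and is not spelled out on this page; the trial values are made up for illustration.

```python
def velocity_mapping_error(commanded: float, measured: float) -> float:
    """Relative error, in percent, between a commanded and a measured velocity.

    Assumed definition: |measured - commanded| / |commanded| * 100.
    """
    if commanded == 0:
        raise ValueError("commanded velocity must be non-zero for a relative error")
    return abs(measured - commanded) / abs(commanded) * 100.0


def summarize(errors: list[float]) -> tuple[float, float, float]:
    """Return (min, max, mean) of a list of percentage errors."""
    return min(errors), max(errors), sum(errors) / len(errors)


if __name__ == "__main__":
    # Made-up (commanded, measured) pairs in m/s, for illustration only.
    trials = [(0.20, 0.19), (0.10, 0.104), (-0.15, -0.142)]
    errors = [velocity_mapping_error(c, m) for c, m in trials]
    lo, hi, mean = summarize(errors)
    print(f"min={lo:.2f}%  max={hi:.2f}%  mean={mean:.2f}%")
```

Reporting the minimum, maximum, and mean together makes it clear whether a quoted bound refers to the worst trial or to the average.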

Gait and limb-mode switching

A single policy handles the transition between upright walking (rigid limbs, inflated pouch) and low-profile crawling (soft limbs, deflated pouch) by changing only the body-height command.
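As a rough sketch of what "changing only the body-height command" could look like on the command side, the snippet below holds the velocity fields fixed and moves just the height field. The `Command` layout, the two height set-points, and the linear ramp are all hypothetical; whether the command on LUART is ramped or stepped is not stated here.

```python
from dataclasses import dataclass


@dataclass
class Command:
    """Hypothetical policy command: planar velocity, yaw rate, and body height."""
    vx: float
    vy: float
    yaw_rate: float
    body_height: float


# Illustrative heights in meters; the real set-points are not given on this page.
WALK_HEIGHT_M = 0.15
CRAWL_HEIGHT_M = 0.06


def height_ramp(start: float, end: float, steps: int) -> list[float]:
    """Linearly interpolate the height command over `steps` control ticks."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [start + (end - start) * (i + 1) / steps for i in range(steps)]


def walk_to_crawl(vx: float, steps: int = 50) -> list[Command]:
    """Keep the velocity command fixed and change only the body-height field."""
    heights = height_ramp(WALK_HEIGHT_M, CRAWL_HEIGHT_M, steps)
    return [Command(vx=vx, vy=0.0, yaw_rate=0.0, body_height=h) for h in heights]


if __name__ == "__main__":
    for cmd in walk_to_crawl(vx=0.1, steps=5):
        print(cmd)
```

The point of the sketch is only that the policy itself is untouched: the gait change comes from the value fed into one command channel.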

Multi-terrain field tests

LUART navigating concrete, mud, grass, gravel, and streambed terrain in field trials at Yale and East Rock Park. Adaptive limb/gait switching keeps cost of transport at roughly half that of pure rigid-limb crawling across all terrains.

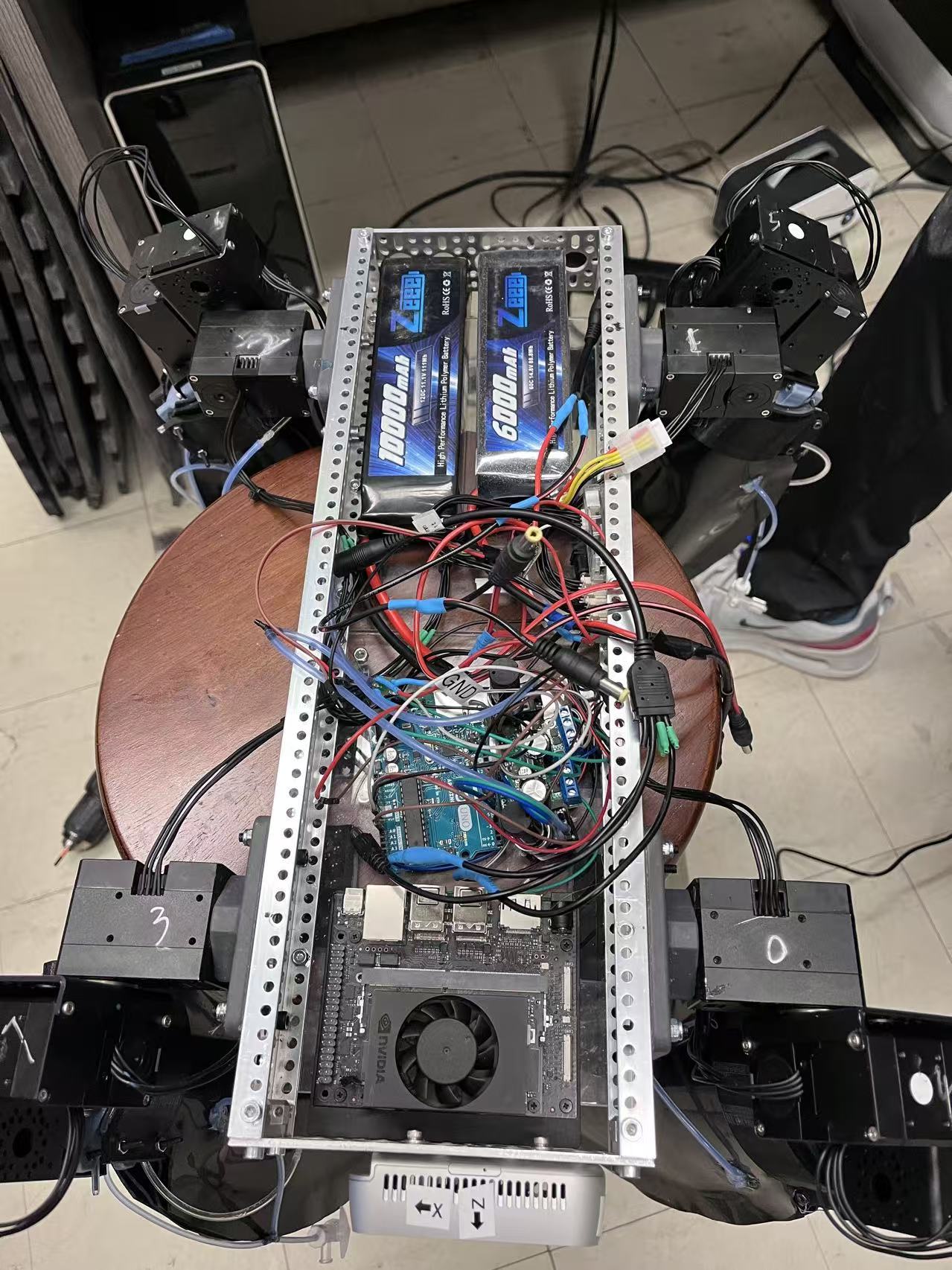

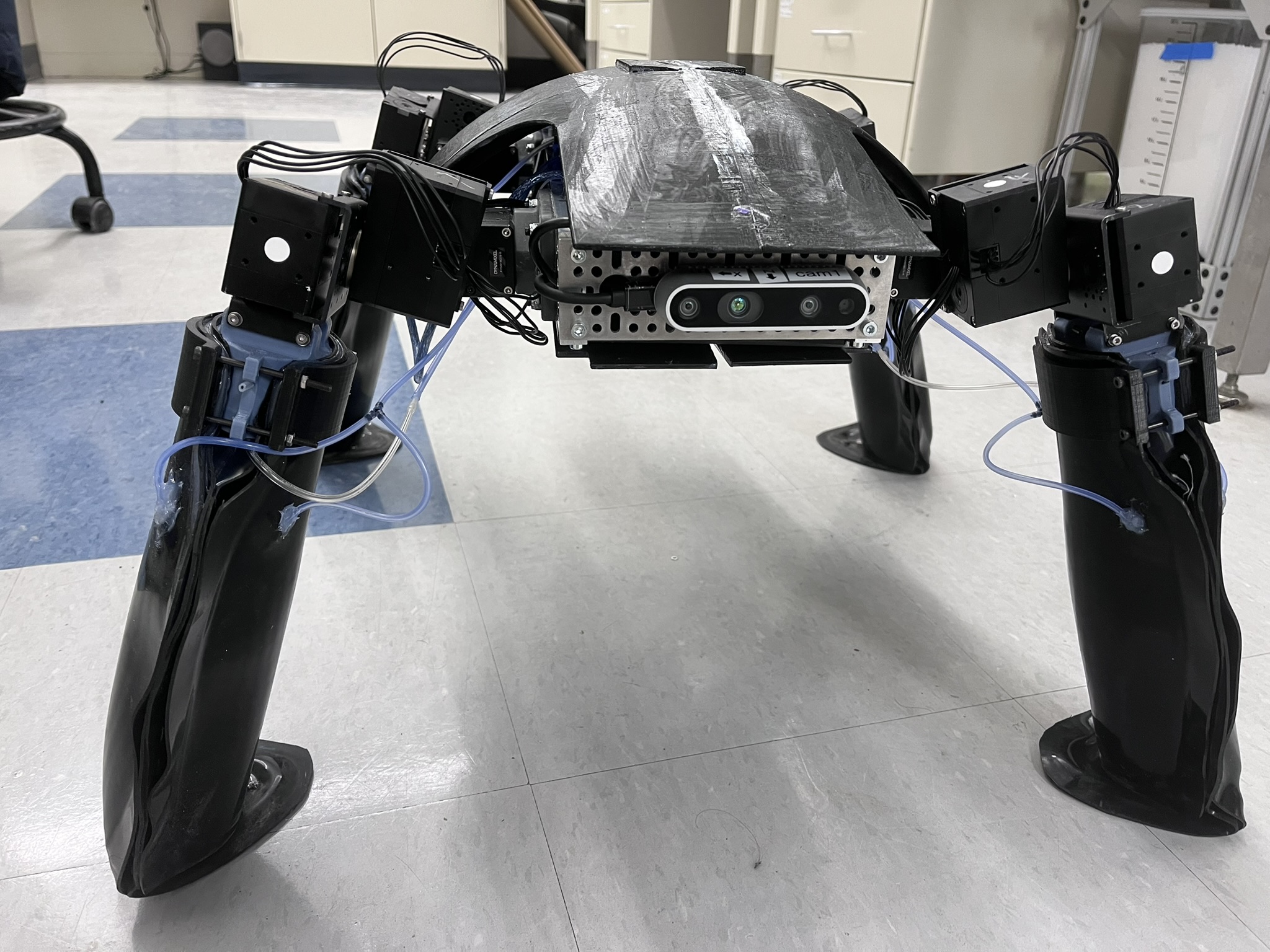

Behind the scenes

Left: the robot guts — Jetson Orin Nano, dual LiPo batteries, Dynamixel XH joints, and the pneumatic PCB that drives pouch inflation and jamming. Right: assembled platform with morphing limbs mounted.

The Faboratory turtle team after the Yale Engineering feature.

Field testing along a muddy creek bank in New Haven (left) and with co-first-author Jue Wang during an outdoor run (right). ArUco markers on the shell were used for ground-truth pose when GPS resolution wasn't enough.

Coverage on @yaleengineering's Instagram featuring the Faboratory and the field deployment.

NVIDIA GTC 2025 in San Jose — sharing the RL + Genesis simulation stack that powered LUART.

Key results

- Sim-to-real fidelity: velocity mapping error 2.29%–7.76% (mean 5.29%) on flat, rigid terrain for walking; 1.00%–5.20% (mean 3.41%) for crawling.

- Gait repertoire: forward/backward walking and crawling, omnidirectional translation, in-place turning (CW/CCW), figure-eight curve turns, and dynamic walk↔crawl transitions — all from a single learned policy.

- Energetics: an order-of-magnitude reduction in cost of transport compared to our previous open-loop ART (Amphibious Robotic Turtle), across flat ground, mud, grass, and gravel.

- Field robustness: multi-terrain courses on concrete, mud, grass, gravel, rain-softened soil, and streambeds; navigates under obstacles, climbs curbs, and reorients with its backward gait in confined spaces.
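Cost of transport, the metric behind the energetics result above, is conventionally the energy spent divided by weight times distance traveled, which makes it dimensionless and comparable across robots of different sizes. The helper below is a minimal sketch of that standard definition; the function names are mine, and how energy was actually measured for LUART is not covered here.

```python
G = 9.81  # approximate gravitational acceleration, m/s^2


def cost_of_transport(energy_j: float, mass_kg: float, distance_m: float) -> float:
    """Dimensionless cost of transport: E / (m * g * d)."""
    if mass_kg <= 0 or distance_m <= 0:
        raise ValueError("mass and distance must be positive")
    return energy_j / (mass_kg * G * distance_m)


def cost_of_transport_from_power(power_w: float, mass_kg: float, speed_m_s: float) -> float:
    """Equivalent form for steady motion: P / (m * g * v)."""
    if mass_kg <= 0 or speed_m_s <= 0:
        raise ValueError("mass and speed must be positive")
    return power_w / (mass_kg * G * speed_m_s)


if __name__ == "__main__":
    # Made-up numbers, for illustration only: 500 J over 10 m for a 5 kg robot.
    print(f"CoT = {cost_of_transport(500.0, 5.0, 10.0):.3f}")
```

Because the metric divides by distance, a gait that draws more power can still come out ahead if it covers ground faster on a given terrain.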

Publication

- J. Wang*, M. Jiang*, L. A. Ramirez, B. Yang, M. Zhang, E. Figueroa, W. Yan, R. Kramer-Bottiglio, “Surrogate compliance modeling enables reinforcement learned locomotion gaits for soft robots,” 2025. *Equal contribution.